Last week I posted Part One of a two-parter based on Karen Field’s recent article, “10 Skills Embedded Engineers Need Now”, which was published on Embedded.com.

Matt Liberty thinks engineers should get out of their comfort zones, with special emphasis – never bad advice to engineers – on keeping a good balance between interpersonal and engineering skills. Jean LaBrosse’s advice is to “become skilled at expressing yourself” (both in words and in graphics). No, engineers don’t need to become artists. But they “should have as a fundamental skill the ability to use block diagrams, state machine diagrams, pictures or clouds or light boxes or whatever tool can aid in conveying concepts. Particularly if they are trying to explain how something works.”

These overlapping points are excellent. I’ll go so far as to say that I’d trade a little engineering talent to have someone on our team who communicates well and has great interpersonal skills. Engineering prowess is not everything. As Jean LaBrosse says, you need to be able to communicate effectively to be successful.

Henry Wintz stresses getting some RTOS experience, since it is in high demand (and commands a salary premium). “Given that at any given point the CPU can be called to run a different task,” engineers with RTOS experience under their belt “know how to make sure that the resource they are currently using is not going to be trampled on. In short, they know how to protect resources from other tasks using the service unexpectedly, while maintaining performance.”

I agree with the point about critical regions, though at Critical Link we are seeing a shift toward embedded Linux and away from big RTOSes.
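To make the critical-region idea concrete, here is a minimal sketch of protecting a shared resource from concurrent tasks. It assumes an embedded Linux target and uses POSIX threads and a `pthread_mutex_t` as a stand-in for an RTOS mutex; most RTOSes offer an equivalent mutex or semaphore service under different names. The shared counter, `worker`, and `run_workers` are hypothetical, for illustration only.

```c
#include <pthread.h>
#include <stddef.h>

/* Hypothetical shared resource: a counter that several tasks update. */
static long shared_counter = 0;
/* Mutex guarding the critical region around shared_counter
 * (stand-in for an RTOS mutex/semaphore). */
static pthread_mutex_t counter_lock = PTHREAD_MUTEX_INITIALIZER;

/* Each worker ("task") increments the counter n times. The mutex ensures
 * that a context switch in the middle of the read-modify-write cannot let
 * another task trample on the value. */
static void *worker(void *arg)
{
    long n = *(const long *)arg;
    for (long i = 0; i < n; i++) {
        pthread_mutex_lock(&counter_lock);   /* enter critical region */
        shared_counter++;                    /* keep the locked section short */
        pthread_mutex_unlock(&counter_lock); /* leave critical region */
    }
    return NULL;
}

/* Hypothetical helper: starts num_tasks threads (at most 16), each doing
 * `iterations` increments, waits for all of them, and returns the final
 * counter value. Returns -1 on bad arguments or if a thread can't start. */
long run_workers(int num_tasks, long iterations)
{
    pthread_t threads[16];
    if (num_tasks < 1 || num_tasks > 16 || iterations < 0)
        return -1;

    shared_counter = 0;
    for (int t = 0; t < num_tasks; t++) {
        if (pthread_create(&threads[t], NULL, worker, &iterations) != 0) {
            /* Clean up the threads that did start before bailing out. */
            for (int j = 0; j < t; j++)
                pthread_join(threads[j], NULL);
            return -1;
        }
    }
    for (int t = 0; t < num_tasks; t++)
        pthread_join(threads[t], NULL);
    return shared_counter;
}
```

Built with `-pthread`, a call such as `run_workers(4, 10000)` should return 40000. Remove the lock/unlock pair and the total can come up short, because increments from different threads interleave. Keeping the locked section as small as possible is what preserves performance.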

Jen Costillos says to diversify your skills. Those working on bare-metal systems might want to take a Linux driver class; conversely, those working on large systems might want to try their hand at bare-metal work. She also advises “moving up the stack: Make a mobile app or learn some back-end server stuff. It will give you a new vocabulary and perspective.” She’s also a proponent of using off-the-shelf boards because they allow an engineer “to focus on the hard, unique stuff.” Critical Link has been advocating this position for years. So, ‘hear, hear.’

Software knowledge, even beyond C and C++, is important, but Elecia White thinks that “the newest trendy language is not as important as the newest, trendy processor technology….that’s just the nature of embedded.” (While we’re less focused on “trendy” than on what makes sense for the industrial-strength apps our customers develop, I’ll give another ‘hear, hear’ for the importance of processor knowledge for embedded engineers.)

Developing a systems engineering mindset is certainly something that we encourage at Critical Link, as does Adam Taylor, who writes, “I have seen a number of projects suffer because things like a clear defined requirement baseline, verification strategy and a plan for demonstrating compliance was not considered early enough in the project.”

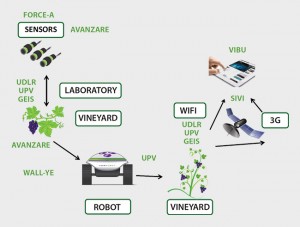

The final piece of advice in the article came from Chris Svec: “learn wireless connectivity…specifically wifi and/or Bluetooth low energy (BLE).” Given the growth of the Internet of Things, on both the consumer and industrial side, I’d say that this is pretty good advice.

With technology moving so quickly, embedded engineers would do well to keep learning about new technical developments. Doing so will help them advance their careers.