It’s summer, so we expect it to be hot. Just not as hot as it’s been around the country of late. Even up here in the north country, where we’re more used to extreme weather winters than extreme weather summers, it’s been too darn hot.

And so, we do whatever we can to stay cool.

If we’re fortunate enough to afford it, we sleep with the window air conditioner humming away in the bedroom. When we wake up in the morning, we hop in our car and rev up the air conditioning for the drive to our AC’d office.

If you live and work in Syracuse, that drive to work may well take you through Carrier Circle, passing by the massive Carrier facilities where, for many years, air conditioners were manufactured. Although they’re no longer building AC’s in Syracuse, Carrier remains the world’s largest producer of air conditioning units. And it was Carrier that largely made possible the expansion of the use of air conditioning, which continues to grow worldwide. As the planet heats up, demand for AC will continue to increase.

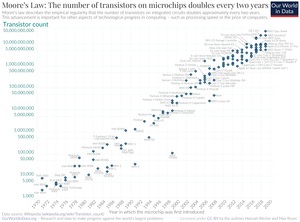

It’s been estimated that, by 2050, there will be 4.5 billion air conditioning units in place around the world, and they’ll be responsible for consuming 13 percent or so of all electricity. There’s a feedback loop in play here: the more air conditioning used, the more its use will contribute to climate change – with the higher temperatures and longer heat waves that will bring – which in turn will result in accelerated demand for air conditioning. All this to ponder while taking into consideration that the fundamental technology used in air conditioning hasn’t changed all that much since the 1940’s, when AC’s popularity began really taking off.

But there are a number of new approaches to air conditioning in the works, and I recently came across a roundup of some of the emerging ideas in an article by Robin Fearon that appeared on Discovery.com.

Some of the suggestions aren’t particularly reliant on sophisticated technology. Plugging air leaks in buildings, greater use of insulation, and covering the roof areas of buildings with white reflective materials will result in a lot less energy use, saving both money and the environment.

The vast majority of American households have AC units. Today’s air conditioners are more energy-efficient than they were in the past but, Fearon asks, “what if each unit captured carbon dioxide from the air and converted it into renewable hydrocarbon fuels?” The carbon extracted from carbon dioxide and the hydrogen from water in the air could be turned into liquid synthetic oil: carbon-neutral energy!

Energy-efficiency could be improved with “hybrid technologies that transfer heat between semiconductors or use magnetic fields.” Performance would be increased by a factor of 4 or 5.

There are a number of ideas being explored for reducing, or getting rid of entirely, hydrofluorocarbon refrigerant use in AC’s.

Alternatives include using low-cost solid-state non-toxic ‘plastic crystals’ and a moisture storage battery assembly that dries the air first, making it easier to cool.

Another idea being considered uses a built-in solar panel to power a unit’s compressor and heat exchanger.

Alternative cooling technologies such as ground source and dual-source heat pumps that draw on ground and air temperature exchange to cool buildings are also increasing in popularity. In winter they warm buildings using ground heat and in summer they pump heat out of buildings into the ground and surrounding air.

Grid technologies are also getting cleaner and smarter, with more use of renewable sources of energy coming to the fore. Within buildings, improved control systems and sensors are being deployed to minimize energy need. (E.g., if there’s no one working in an area, there’s no need to keep that area cool.)

What else is in the works?

Penn State has a thermoacoustic chiller “which uses soundwaves and helium to lower temperatures.” Then there are:

…mirrors that simply bounce heat radiation out into the cold reaches of space, reducing the energy needed to cool structures by up to 70 percent.

There’s a lot happening that will make it possible for us to keep cool without making environmental conditions any worse.

Meanwhile, for Syracuse folks and/or fans of Syracuse University basketball, the Carrier Dome, which for more than 40 years was the site of SU basketball (and football and lacrosse) games, has acquired a new name. It’s now the JMA Wireless Dome. The Dome has recently been refurbished and now, for the first time, it’s got air conditioning. (From Carrier, I assume…)
